
Vision System 2008

Project #2 for CS434: Artificial Intelligence & Robotics

James Painter '08

After its subpar performance at IGVC 2006, the vision system is a primary target for improvement as Wunderbot IV prepares for IGVC 2008. Here, I explain the changes I have begun to make and the promising results so far.


Problem

IGVC Autonomous Challenge: traverse a path bounded by two parallel, spray-painted white lines ten feet apart.

Strategy

Simulate stereo vision by dividing the single camera view vertically into a left half and a right half, then search each half for the line. If a line is found on the left, estimate its distance from the robot from its vertical position in the image, and decrease the speed of the right wheel by an amount proportional to the line's proximity. A line found on the right slows the left wheel in the same way.
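The split-view idea can be illustrated with a minimal Python sketch. It is not the camera's actual software: the frame is assumed to be a plain list of grayscale pixel rows, and `split_halves`, `line_row`, and the brightness threshold of 200 are hypothetical names and values chosen for illustration.

```python
def split_halves(frame):
    """Split a frame (a list of equal-length pixel rows) into left and right halves."""
    mid = len(frame[0]) // 2
    left = [row[:mid] for row in frame]
    right = [row[mid:] for row in frame]
    return left, right


def line_row(half, threshold=200):
    """Return the index of the row with the most bright pixels, or None if none are bright.

    The threshold is an assumed value for "white paint" on a 0-255 grayscale.
    """
    best_row, best_count = None, 0
    for y, row in enumerate(half):
        count = sum(1 for pixel in row if pixel >= threshold)
        if count > best_count:
            best_row, best_count = y, count
    return best_row


if __name__ == "__main__":
    # Tiny 4x6 test frame with a bright streak in the left half only.
    frame = [
        [0, 0, 0, 0, 0, 0],
        [0, 0, 0, 0, 0, 0],
        [255, 255, 0, 0, 0, 0],
        [0, 0, 0, 0, 0, 0],
    ]
    left, right = split_halves(frame)
    print("left line row:", line_row(left))
    print("right line row:", line_row(right))
```

The row index returned for each half is the "vertical position" that the steering logic would then turn into a speed reduction for the opposite wheel.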

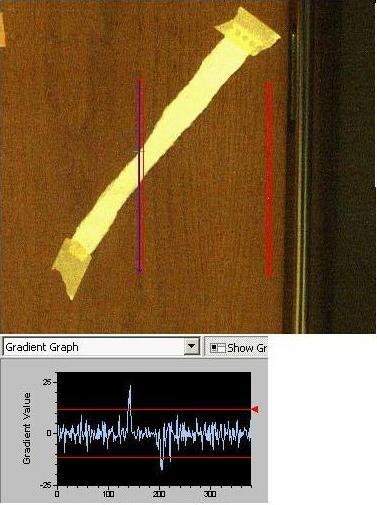

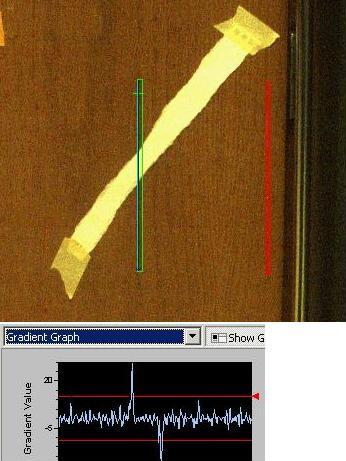

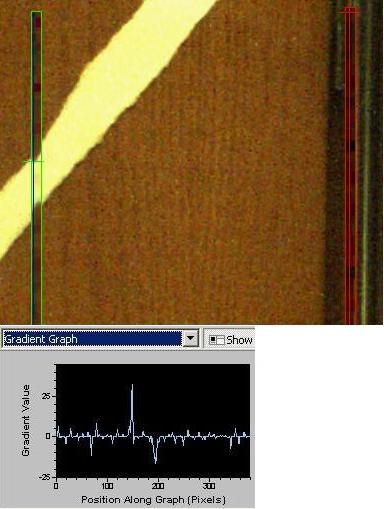

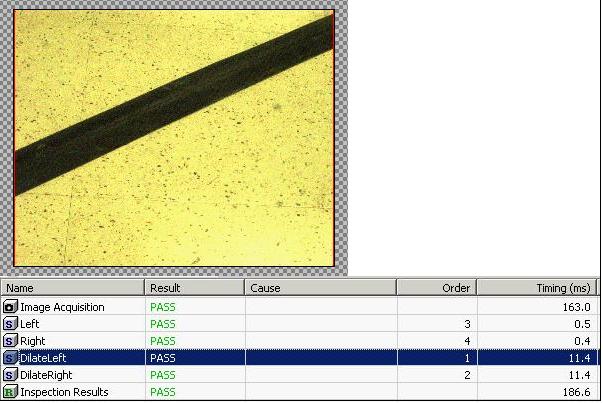

Line Detection

The proprietary software packaged with the camera contains powerful built-in image processing functions. To find the line more accurately, the image is pre-processed with a filter. Many choices were available, and I found these three to be effective:

- De-Noise

- Dilate

- Explicit convolution filter

Preliminary testing showed the benefit of using a dilation filter to eliminate small holes in the detected line.

Gradient on left side using no filter:

Gradient on left side using 3x3 dilation filter:

Gradient on left side using 9x9 dilation filter:

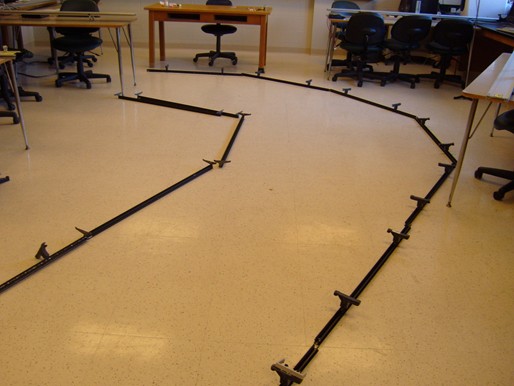

Test course:

De-noising proved most effective on our test surface, a speckled floor, and allowed me to achieve over 90% accuracy. To keep processing time as low as possible, the filter was applied only to the one-pixel-wide line detection region.
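The camera's built-in filters are proprietary, so the following Python sketch only illustrates the general idea on a one-pixel-wide column of grayscale values. It assumes a median filter as a stand-in for De-Noise and a one-dimensional maximum filter as a stand-in for the 3x3 and 9x9 dilation filters; all function names are hypothetical.

```python
def median_filter(values, size=3):
    """Replace each value with the median of its neighborhood (removes isolated speckles)."""
    half = size // 2
    out = []
    for i in range(len(values)):
        window = sorted(values[max(0, i - half): i + half + 1])
        out.append(window[len(window) // 2])
    return out


def dilate(values, size=3):
    """Replace each value with the maximum of its neighborhood (fills small dark holes)."""
    half = size // 2
    return [max(values[max(0, i - half): i + half + 1]) for i in range(len(values))]


def strongest_edge(values):
    """Return the index just after the largest brightness step, or None if the column is flat."""
    best_index, best_step = None, 0
    for i in range(len(values) - 1):
        step = abs(values[i + 1] - values[i])
        if step > best_step:
            best_index, best_step = i + 1, step
    return best_index


if __name__ == "__main__":
    speckled = [10, 10, 255, 10, 10, 200, 200, 200, 10, 10]  # one bright speckle at index 2
    holed = [10, 10, 10, 200, 10, 200, 200, 10, 10]          # one dark hole at index 4
    print(median_filter(speckled))
    print(dilate(holed))
    print(strongest_edge(median_filter(speckled)))
```

Filtering only this single column, rather than the whole frame, mirrors the point made above about keeping processing time low.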

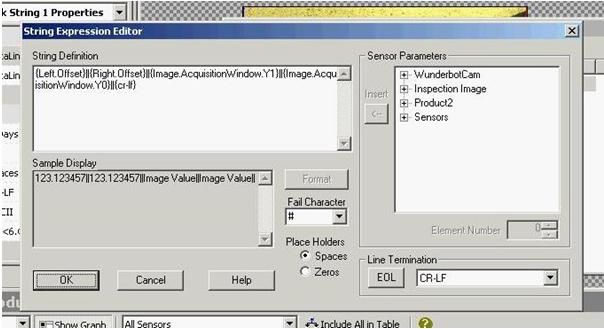

TCP/IP Send String

Every 50 ms, a data string is sent over TCP/IP to LabVIEW containing a series of four numbers separated by "||". The first two are the y-coordinates of the top and bottom pixels of the viewable region. The viewable region can be cropped to save processing time; in my demonstration, however, I use the entire view, making these first two values 1279 and 1023. The last two numbers are the y-coordinates of a line found on the left and right sides, respectively. If no line is found on a side, that value defaults to 1023.
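A minimal Python sketch of building and sending such a string follows. It is an illustration only, not the camera's script: the function names, host, port, and `get_reading` callback are hypothetical, and since the text does not mention a message terminator, none is added here.

```python
import socket
import time

NO_LINE = 1023  # default value sent when no line is found on a side


def format_vision_string(top, bottom, left_y=None, right_y=None):
    """Join the four values with "||", substituting the default for a missing line."""
    fields = [
        top,
        bottom,
        NO_LINE if left_y is None else left_y,
        NO_LINE if right_y is None else right_y,
    ]
    return "||".join(str(int(value)) for value in fields)


def send_forever(host, port, get_reading, period_s=0.05):
    """Send one string every period_s seconds (0.05 s = 50 ms).

    get_reading is a hypothetical callback returning (top, bottom, left_y, right_y).
    """
    with socket.create_connection((host, port)) as conn:
        while True:
            message = format_vision_string(*get_reading())
            conn.sendall(message.encode("ascii"))
            time.sleep(period_s)


if __name__ == "__main__":
    # Full view, line seen on the left at y = 512, nothing on the right.
    print(format_vision_string(1279, 1023, 512, None))
```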

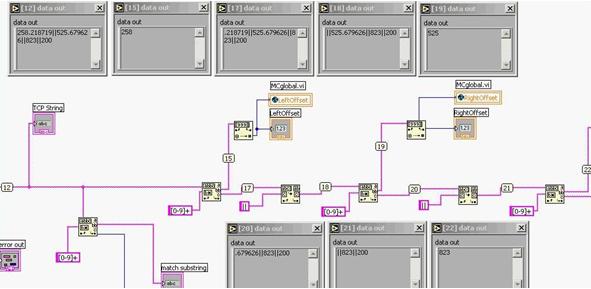

LabVIEW TCP/IP Parsing

A camera VI in LabVIEW runs continually in a while loop, checking for the camera's TCP/IP string. Once a string is detected, the VI parses it back into its individual parts using a sequence of "match-string" controls. The values are then stored in global variables for the main motor control VI.
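The parsing step is done graphically in LabVIEW, but the same idea can be sketched in Python. This is only an illustration of splitting the string on "||"; `parse_vision_string` is a hypothetical name, and the returned tuple stands in for the global variables.

```python
def parse_vision_string(message):
    """Split a "top||bottom||left||right" string into four integers."""
    parts = message.strip().split("||")
    if len(parts) != 4:
        raise ValueError("expected 4 fields, got %d: %r" % (len(parts), message))
    top, bottom, left_y, right_y = (int(part) for part in parts)
    return top, bottom, left_y, right_y


if __name__ == "__main__":
    top, bottom, left_y, right_y = parse_vision_string("1279||1023||512||1023")
    print(top, bottom, left_y, right_y)
```

Rejecting strings that do not have exactly four fields keeps a partial or merged TCP read from silently producing wrong line positions.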

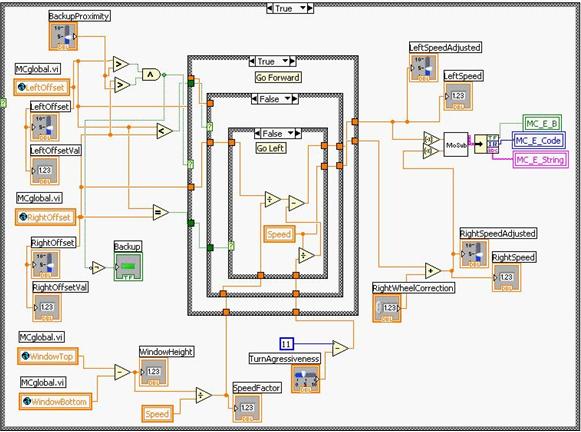

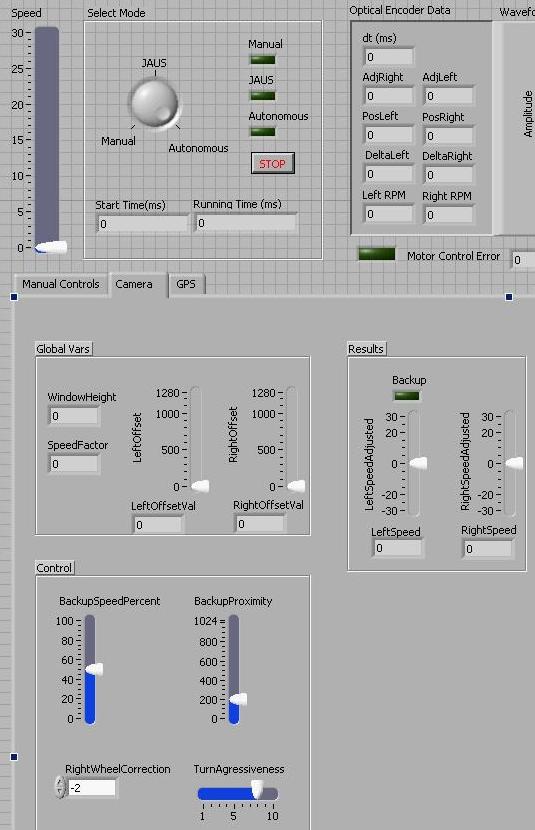

LabVIEW Motor Control

The motor control VI contains the logic that lets the robot avoid lines, based on the line locations sent from the camera. It checks whether a line is present on the left or the right and, if both are present, which is closer. The speed of the wheel on the opposite side is then decreased. The amount of the decrease is calculated from the y-position of the detected line, where lower means closer to the robot. Also factored into the calculation is a "TurnAggressiveness" factor, a user-set value between 1 and 10. If the line lies within a user-set "BackupProximity", the robot halts its forward progress and runs in reverse until the line is outside that range.
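The decision logic can be sketched in Python as follows. The actual LabVIEW formula is not given, so this is one possible reading under stated assumptions: a smaller y value means a closer line, a y equal to `max_y` (1023) means no line was found, the speed reduction scales linearly with closeness and with TurnAggressiveness divided by 10, and two equally close lines leave the robot driving straight. The function name, parameter names, and speed units are hypothetical.

```python
def wheel_speeds(left_y, right_y, base_speed=100.0, turn_aggressiveness=5,
                 backup_proximity=100, max_y=1023):
    """Return (left_speed, right_speed) for the given line positions.

    Assumptions (not from the original VI): smaller y = closer line,
    y >= max_y = no line, linear scaling of the speed reduction.
    """
    if not 1 <= turn_aggressiveness <= 10:
        raise ValueError("turn_aggressiveness must be between 1 and 10")

    nearest = min(left_y, right_y)
    if nearest >= max_y:
        # No line on either side: drive straight.
        return base_speed, base_speed
    if nearest <= backup_proximity:
        # Line is too close: stop forward progress and reverse.
        return -base_speed, -base_speed

    closeness = 1 - nearest / max_y  # 0 = far away, 1 = at the robot
    reduction = base_speed * closeness * turn_aggressiveness / 10
    if left_y < right_y:
        # Line on the left is closer: slow the right wheel to turn away.
        return base_speed, base_speed - reduction
    if right_y < left_y:
        # Line on the right is closer: slow the left wheel.
        return base_speed - reduction, base_speed
    # Both lines equally close: keep going straight (assumption).
    return base_speed, base_speed


if __name__ == "__main__":
    print(wheel_speeds(1023, 1023))  # no lines seen
    print(wheel_speeds(400, 1023))   # line on the left only
    print(wheel_speeds(50, 1023))    # line inside the backup range
```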

Miscellaneous Factors

- Due to some weight imbalance, the robot does not drive straight when identical speed commands are sent to the motor controllers. Thus, a turning correction factor is added to one wheel at all times.

- The robot can be seen slowing down while turning. This is due to the resistance of the misaligned casters. The motor controllers apply a constant force at all times, but some of that force is needed to overcome the casters, which takes away from the robot's forward speed.

- All of these factors will be corrected once closed-loop control is implemented. The optical encoders will be able to detect when the robot is not making the exact forward progress specified (accurate to 17 mm), and the force applied by the motor controllers will be increased or decreased accordingly.
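Closed-loop control is not yet implemented on the robot, so the following Python sketch shows only one simple form it could take: a proportional correction for a single wheel. The function name, the gain of 0.5, and the use of the 17 mm encoder accuracy as a dead band are all assumptions made for illustration.

```python
ENCODER_RESOLUTION_MM = 17.0  # encoder accuracy quoted above, used here as a dead band


def corrected_command(command, target_mm, measured_mm, gain=0.5):
    """Adjust a motor command in proportion to the forward-progress error.

    target_mm is the distance the wheel should have covered since the last
    update and measured_mm is what the encoder reports. Errors smaller than
    the encoder resolution are ignored because they cannot be measured reliably.
    """
    error = target_mm - measured_mm
    if abs(error) < ENCODER_RESOLUTION_MM:
        return command
    return command + gain * error


if __name__ == "__main__":
    # Wheel fell 40 mm short of its target, so the command is raised.
    print(corrected_command(100.0, target_mm=100.0, measured_mm=60.0))
    # A 10 mm shortfall is below the encoder resolution, so nothing changes.
    print(corrected_command(100.0, target_mm=50.0, measured_mm=40.0))
```

Running this correction separately for each wheel would address both the weight imbalance and the caster drag, since each shows up as a shortfall in that wheel's measured progress.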

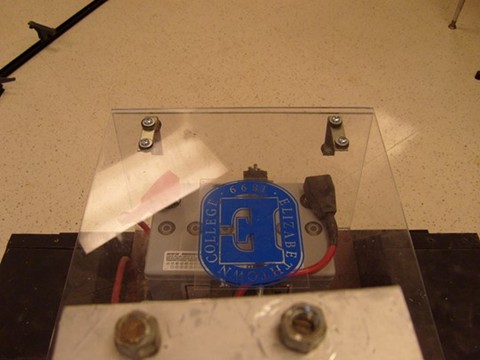

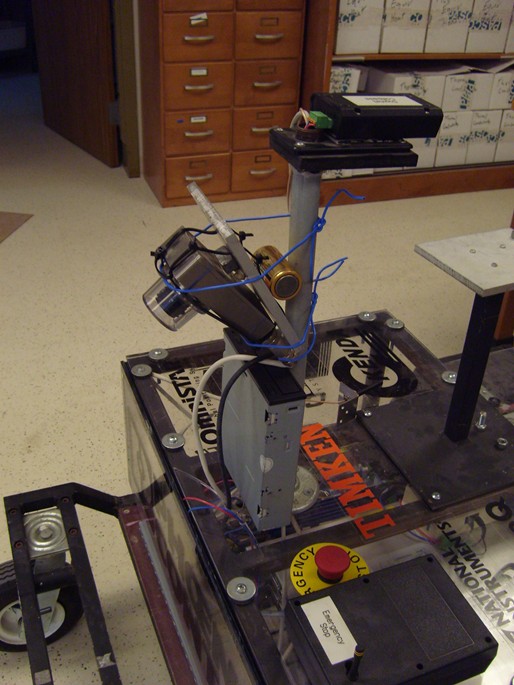

Structural Modifications

The orientation of the robot was reversed (front became back, and back became front) for three reasons.

- The original camera position left the viewing range obstructed by the batteries, and the camera could not see the region of about two feet directly in front of the wheels. With the camera on the rear (now the front), the view down to the wheels is unobstructed.

Old View:

New View:

- When approaching a line very closely and having to make a sharp turn, the front wheels would act as the pivot and the rear of the robot would swing around, often unknowingly right over the boundary line. With the new orientation, it is the front wheels, directly under the camera, that swing, which makes the robot's swinging footprint more predictable.

- The new orientation has a convenient, flat mounting area on the front bumper. This makes a nice place for the laser range-finders that will soon be integrated into the Wunderbot.

After the robot was programmed to run in reverse, the camera position still needed some adjusting before it could see directly in front of the wheels. Thus, it was raised about eight inches on the digital compass pole, and its downward tilt angle was increased to about 70 degrees.

New state-of-the-art camera mount:

Demonstration

Video Link